R/exams for Distance Learning: Resources and Experiences

Motivation

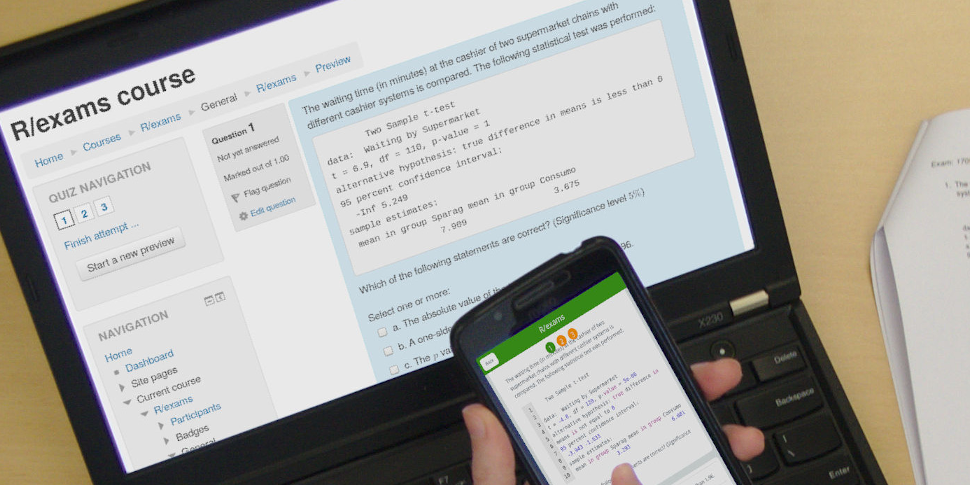

The COVID-19 pandemic forced many of us to switch abruptly from our usual teaching schedules to distance learning formats. Within days or a couple of weeks, it was necessary to deliver courses in live streams or screencasts, to develop new materials for giving students feedback online, and to move assessments to online formats. This led to a sudden increase in interest in video conferencing software (like Zoom, BigBlueButton, …), learning management systems (like Moodle, Canvas, OpenOlat, Blackboard, ILIAS, …), and, last but not least, our R/exams package. Due to the flexible “one-for-all” approach, it was relatively easy for R/exams users who had been using the system for in-class materials to transition to e-learning materials. In addition, many new R/exams users emerged who were interested in leveraging their university’s learning management system (often Moodle) for their classes (often related to statistics, data analysis, etc.).

As distance learning will continue to play an important role in most universities in the upcoming semester, I called for sharing experiences and resources related to R/exams. Here, I collect and summarize some of the feedback from the R/exams community. The full original threads can be found on Twitter and in the R-Forge forum, respectively.

- Twitter (@AchimZeileis): After a semester of #distancelearning is before the next semester of #distancelearning…

- R-Forge forum: Experiences with R/exams for distance learning.

Resources

Due to the increased interest in R/exams, I contributed various YouTube videos to help users get an overview of the system and tackle specific tasks (creating exercises or online tests, summative exams, etc.). Also, the many questions asked on StackOverflow and the R-Forge forum led to numerous improvements in the software and support for various typical challenges.

- R/exams webinar @ WhyR?: YouTube video, blog post.

- Video tutorials: YouTube playlist, Achim Zeileis.

- Questions & answers: StackOverflow/r-exams, R-Forge forum.

In addition to the materials that we developed, many R/exams users stepped up and created really cool and useful materials of their own. Users in Spain in particular showed a lot of interest and made great contributions:

- Jonathan Tuke (YouTube video, in English): Online tests in Canvas.

- Julio Mulero (blog post, in Spanish): Online tests in Moodle.

- Estadística útil (YouTube videos, in Spanish): Package, Installation, First steps, Single choice, Written exams, Moodle exams.

- Pedro Luis Luque Calvo (YouTube video and blog, in Spanish): Online tests in Blackboard, blog post.

- Kenji Sato (blog posts, in Japanese or English): Part 1, Part 2, Part 3, bordered tables.

- Faisal Almalki (YouTube video and supporting materials, in Arabic): Online tests in Blackboard, slides, exercises.

At Universität Innsbruck

Not surprisingly, we had been using R/exams extensively in our teaching for a blended learning approach in a Mathematics 101 course (R/exams blog). The asynchronous parts of the course (screencasts, forum, self tests, and formative online tests) were already delivered through the university’s OpenOlat learning management system. Thus, we “just” had to switch the synchronous parts of the course (lecture, tutorials, and summative written exams) to distance learning formats:

| | Learning | Feedback | Assessment |
|---|---|---|---|
| Synchronous | Lecture, Live stream | Previously: Live quiz; Now: Online test (+ Tutorial) | Previously: Written exam; Now: Online exam |
| Asynchronous | Textbook, Screencast | Self test (+ Forum) | Online test |

The most challenging part was the summative online exam (R/exams blog), but overall everything worked out reasonably well. One nice aspect was that other colleagues immediately profited from using a similar approach for their online exams, like my colleague Lisa Lechner from the Department of Political Science:

Lisa Lechner: R/exams was a life-saver during the difficult semester we faced. I used it for the statistics lecture with approx. 300 students (final online exam, but also ARSnova questions) and for a smaller course on empirical methods in political science. But even before the pandemic, R/exams increased the fairness of my final exams and reduced the time spent on evaluating results. #bestRpackage2020

Replacing written exams

Finding a suitable replacement for the summative written exams was a challenge not only for us. One concern that came up several times was that learning management systems like Moodle might not be reliable enough for online exams, especially if students’ internet connections were flaky. Hence, one idea that was discussed, especially with Felix Schönbrodt and Arthur Charpentier, was to e-mail fillable PDF forms (StackOverflow Q&A) to students instead of relying entirely on Moodle (Twitter). However, I was able to convince them that PDF forms would create more problems than they solve (see the linked threads above for more details):

Felix Schönbrodt @nicebread303: We thought about doing a (pseudo-written) PDF-exam, but we are very glad that we followed @AchimZeileis advice to go for Moodle. Grading is so much easier!

Another concern is, of course, cheating, no matter which format the exams use. Many R/exams users recommended using open-ended questions and/or more complex cloze questions that force participants to interact with a certain randomized problem, e.g., based on a randomized data set. As Ulrike Grömping reports, this can also be combined with online proctoring for moderately sized exams.

Ulrike Grömping (R-Forge): I have also run online exams via Moodle in real time, supervised in a video conference […]. And I have not used auto correction, but have asked questions that also require free text answers from students (text fields with arbitrary correct answer of (e.g.) 72 characters in a cloze exercise). Of course, the solutions support my manual correction process.

Other approaches to tackling this problem can be found in the R-Forge thread; see, e.g., the contribution by Niels Smits.
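To make the idea of a problem based on a randomized data set a bit more concrete, here is a minimal, language-agnostic sketch in Python. It is not R/exams code: in R/exams the corresponding logic would sit in the R code of an exercise file. The function names `make_exercise` and `check_answer`, the one-seed-per-participant convention, the value range, and the tolerance are all assumptions made for illustration.

```python
import random
import statistics


def make_exercise(seed, n=10):
    """Draw a small random data set and return question text plus solution."""
    rng = random.Random(seed)  # hypothetical: one seed per participant
    data = [rng.randint(50, 100) for _ in range(n)]
    solution = round(statistics.mean(data), 2)
    question = f"Compute the mean of the following values: {data}"
    return {"question": question, "data": data, "solution": solution}


def check_answer(exercise, answer, tol=0.01):
    """Accept a numeric answer within a tolerance of the stored solution."""
    return abs(answer - exercise["solution"]) <= tol


if __name__ == "__main__":
    # Each participant gets their own data set, so copied answers
    # from a neighbor will generally not match.
    for participant_seed in (1, 2):
        exercise = make_exercise(participant_seed)
        print(exercise["question"], "->", exercise["solution"])
```

Because the variant is fully determined by the seed, the same data set and solution can be regenerated later, which also helps when correcting free-text answers manually.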

Moodle vs. other learning management systems

While overall there is a lot of heterogeneity in the learning management systems that the R/exams community uses, Moodle is clearly the favorite. Moodle quizzes also have their quirks but they are surprisingly flexible and can be used at various levels.

Eric Lin @LinXEric: I use #rexams extensively, have been for years. I used to do paper exams. Now, with distance learning, I’m serving up exams, quizzes, entry ticket questions, exit ticket questions, all using a #rexams with #moodle.

The cloze question format is especially popular because it allows combining multiple-choice, single-choice, numeric, and string items.

Rebecca Killick (R-Forge): We moved to using this exclusively for our undergraduate quizzes in the summer of 2019 and this served us well when COVID-19 hit. We are now expanding our online assessment using R/exams. We mainly use cloze questions with randomization.

See also the tweet by Emilio L. Cano @emilopezcano.

Of course, other learning management systems are also very powerful, with Blackboard and Canvas probably being second and third in popularity. The OpenOlat system, also used at our university, is less popular but in various respects even more powerful than Moodle. Its support of the QTI 2.1 standard (question and test interoperability) makes it possible to specify a complete exam, including random selection of exercises, randomized order of questions, and flexible cloze questions.
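The combination of random selection of exercises and randomized question order can be illustrated with a small, language-agnostic sketch in Python. This shows only the underlying idea, not the QTI 2.1 format or any R/exams or OpenOlat API. The function `draw_exam`, the pool layout, and the exercise file names are hypothetical.

```python
import random


def draw_exam(pools, seed):
    """Pick one exercise per pool, then shuffle the question order."""
    rng = random.Random(seed)
    chosen = [rng.choice(pool) for pool in pools]  # random selection
    rng.shuffle(chosen)                            # randomized order
    return chosen


# Hypothetical question bank: each inner list is a pool of
# interchangeable exercises, with one file per exercise.
pools = [
    ["mean1.Rmd", "mean2.Rmd", "mean3.Rmd"],
    ["ttest1.Rmd", "ttest2.Rmd"],
    ["regression1.Rmd", "regression2.Rmd"],
]

if __name__ == "__main__":
    for participant in range(3):
        print(participant, draw_exam(pools, seed=participant))
```

Every participant gets exactly one exercise from each pool, so all exams cover the same topics even though the specific questions and their order differ.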

Collaborating with colleagues

One design principle of R/exams is that each exercise is a separate file. The motivation behind this is to make it easy to distribute work on a question bank among a larger group before someone eventually compiles a final exam from this question bank. Hence it’s great to see that various R/exams users report that they work on the exercises together with their colleagues, e.g., Fernando Mayer @fernando_mayer on Twitter or:

Ilaria Prosdocimi @ilapros: used by me and colleagues in Venice for about 500 exams via Moodle (in different basic stats courses). Mostly single choice and numeric questions. After some initial learning it worked perfectly and made the whole exam writing much easier.

To facilitate their collaboration, the group around Fernando Mayer has even created a ShinyExams interface (in Portuguese).

Statistics & data science vs. other fields

It is not unexpected that the majority of the R/exams community employs the system for creating resources for teaching statistics, data science, or related topics. See the tweets and posts by Filip Děchtěrenko @FDechterenko, @BrettPresnell, Emi Tanaka @statsgen, Dianne Cook @visnut, and Stuart Lee. In many cases such courses are for students from other fields, such as pharmacy as reported by Francisco A. Ocaña @faolmath or biology as reported by:

@OwenPetchey: The Data Analysis in Biology course at University of Zurich, with 300 students this spring, used this fantastic resource to author its final exams, with upload to OLAT.

However, there is also a broad diversity of other fields/topics, e.g., international finance and macroeconomics (Kamal Romero @kamromero), applied economics and accounting (Eric Lin @LinXEric), econometrics (Francisco Goerlich), linguistics (Maria Paola Bissiri), soil mechanics (Wolfgang Fellin), or programming (John Tipton).

Going forward

All the additions, improvements, and bug fixes made to R/exams over the last month are currently only available in the development version of R/exams 2.4-0 on R-Forge. The plan is to also release this version to CRAN in the coming weeks.

Another topic that will likely become more relevant in the coming months is the creation of interactive self-study materials outside of learning management systems, e.g., as part of an online tutorial. Debbie Yuster nicely describes such a scenario:

Debbie Yuster (R-Forge): I plan to use R/exams to maintain source files for “video quizzes” students will take after they watch lecture videos asynchronously in my Introduction to Data Science course. Instructors at different universities will be using these videos (created by the curriculum author), and I will distribute the quizzes to interested faculty, each of whom may have a different preferred delivery mode. I’ll be giving the quizzes through my LMS (Canvas), while others may administer the quizzes in learnr, or use a different LMS. With R/exams, I can maintain a single source, and instructors can convert the quizzes into their preferred format.

Two approaches that are particularly interesting are learnr (RStudio), which uses R/Markdown with shiny, and webex (psyTeachR project), which uses more lightweight R/Markdown with some custom JavaScript and CSS. Some first proof-of-concept code is available for a learnr interface (R-Forge) and for integrating webex exercises in Markdown documents (StackOverflow Q&A), but more work is needed to turn this into full R/exams interfaces.